Research Paper - (2018) Volume 8, Issue 4

Deficient Emotional Intelligence and Dysfunctional Early Emotional Prosody Processing Varying with the Severity of Auditory Hallucinations in Schizophrenics

- Corresponding Authors:

- Li-Fen Chen, PhD

Institute of Brain Science, National Yang-Ming University, No.155, Sec.2, Linong Street, Taipei, 112, Taiwan

Tel: +886-2-2826-7384

- Jen-Chuen Hsieh, MD, PhD

Institute of Brain Science, National Yang-Ming University, No.155, Sec.2, Linong Street, Taipei, 112, Taiwan; Integrated Brain Research Unit, Taipei Veterans General Hospital, No.201, Sect.2, Shih-Pai Rd. Taipei 112, Taiwan

Tel: +886-2-2826-7906

Abstract

Background

Most individuals with schizophrenia (SPs) experience auditory hallucinations (AH) of a negative or threat-related nature; however, the impact of AH on the early perceptual processing of emotions in the auditory cortex remains largely unknown. In this paper, we employed an implicit emotional task to investigate the effects of AH on the early central processing of emotional prosody (within 100 milliseconds after stimulus onset) in SPs. We hypothesized that abnormalities in the emotional responses of SPs may vary with the severity of AH and may also be associated with impaired social cognition.

Methods and findings

A total of 63 SPs with AH of various severities and 21 age-matched control participants (CPs) were recruited for this study. We assessed clinical symptoms and emotional intelligence (EI) as an index of social cognition. Auditory responses, including the M50 and M100 components, to neutral and emotional prosody (happy, sad, angry, and fearful) were recorded using magnetoencephalography. We then compared the groups in terms of emotion-specific response index scores (normalized by the response to the neutral sound), followed by correlation analysis with EI performance. Our results revealed that SPs had a delayed M50 response to the sad prosody compared to CPs. SPs with ongoing AH exhibited faster M50 responses to the angry prosody than SPs without AH. Among SPs with persistent AH, those with worse EI performance exhibited faster M50 responses to negatively laden prosody.

Conclusion

This study revealed that AH influence early emotional responses at a low level of auditory processing. Dysfunction in the processing of less emotionally salient stimuli (sadness) may be a trait feature of schizophrenia. In contrast, a predilection toward high-arousal negative emotions in persistent AH may act as a state feature that contributes to impaired social cognition. These findings provide insight into the neural mechanisms underlying distinct phenotypes of SPs (with or without AH).

Keywords

Schizophrenia, Emotional intelligence, Auditory hallucinations, Emotional prosody, Social cognition, Magnetoencephalography

Background

Approximately 70% of individuals with schizophrenia (SPs) experience auditory hallucinations (AH) [1], which are also referred to as “false perceptions” [2,3]. Because SPs tend to be biased toward negative emotions [4], their AH are often distressingly negative or threat-related [5,6]. Furthermore, previous studies have consistently reported abnormalities in the perception of emotions (e.g., emotional prosody processing) and in affect recognition, which can adversely affect social outcomes [7-10].

SPs exhibit fundamental deficits in central auditory processing, as reflected in the P50 for sensory gating [11], N100 for early auditory encoding [11], P200 for selective attention [12], mismatch negativity (MMN) for automatic pre-attentive processing [13,14], and P300 for later attention-dependent processing [15]. On the one hand, SPs with AH present spontaneous hyperactivity in the auditory-perceptual system, which can interfere with auditory processing [16,17] and subsequently render them vulnerable to deficits in affect perception [18]. On the other hand, SPs exhibit pronounced higher-order deficits in social cognition [19,20].

One key element of normal social cognition is the integrity of emotional intelligence. Emotional intelligence (EI) refers to a set of measurable cognitive abilities, which serve as an index of the emotional component of social cognition [21-24]. Deficits in EI have been linked to the clinical symptoms of SPs as well as their daily functions and treatment outcomes [21,22,25,26]. It is plausible that interactions between abnormal sensory processes and/or deficiencies in affect recognition hinder the higher-order emotional cognition and emotional intelligence of SPs [12]. However, researchers have yet to elucidate the means by which AH interact with emotional prosody processing (particularly early perceptual processing) or how this could lead to deficits in EI.

In the current magnetoencephalography (MEG) study, we investigated the early central processing of various prosodic sounds (i.e., the M50 and M100 components) among SPs with AH of varying severity. We also examined the relationship between brain responses and EI performance. We hypothesized that 1) SPs have fundamental deficits in the early perceptual processing of emotional prosody (particularly negative prosody); 2) active AH in SPs influence the early perceptual processing of negative emotions; and 3) deficits in early emotional processing among SPs experiencing AH are correlated with impaired social cognition, as indexed by EI performance.

Materials and Methods

▪ Participants

The initial study cohort consisted of 98 participants (70 SPs); however, fourteen (7 SPs and 7 control participants, CPs) were excluded due to poor MEG signals resulting from metal dentures or because their heads did not fit the MEG helmet. Sixty-three SPs (mean age 37.37 ± 9.00 years, 33 males) were recruited from outpatient psychiatric clinics and daycare wards. Diagnoses were established in accordance with the Diagnostic and Statistical Manual of Mental Disorders, Fourth Edition, Text Revision (DSM-IV-TR) [27]. The exclusion criteria were as follows: 1) comorbidity with a major medical condition; 2) pregnancy or postpartum psychosis; 3) substance abuse; 4) electroconvulsive therapy in the previous year; and 5) a history of head injury resulting in loss of consciousness. Most of the patients (65/70) were consistently taking atypical antipsychotics during the study. Twenty-one age-matched CPs (mean age 35.37 ± 5.80 years, 11 males), recruited through advertisements on academic bulletin boards, were administered the Mini International Neuropsychiatric Interview to confirm that they had no history of major medical or neurological illness. The study protocol was approved by the Institutional Review Boards of Taipei Veterans General Hospital and Tri-Service General Hospital, and written consent was obtained from each participant. Most of the participants (80/84) were right-handed, as assessed using the Edinburgh Handedness Inventory.

▪ Clinical evaluation and assessment of emotional intelligence

In all cases, the major DSM-IV Axis I diagnosis of schizophrenia was based on the SCID-IV (Structured Clinical Interview for DSM-IV Axis I Disorders). The severity of clinical symptoms and of AH during the week preceding the MEG experiment was assessed using the PANSS (Positive and Negative Syndrome Scale) [28] and the PSYRATS-H (Psychotic Symptoms Rating Scales - Hallucination Subscale) [29], respectively. Based on the score for the “frequency” item of the PSYRATS-H (ranging from 4 = most frequent to 0 = no AH), the SPs were divided into three subgroups: persistent AH (PH, score of 4 or 3), intermittent AH (IH, score of 2 or 1), and remitted/no AH (NH, score of 0). Most of those designated as NH (19/21) had previously experienced AH.
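The subgrouping rule above amounts to a small lookup on the PSYRATS-H frequency score. The following is a minimal Python sketch; only the score thresholds come from the text, while the function names `ah_subgroup` and `group_participants` and the participant IDs are hypothetical.

```python
def ah_subgroup(frequency_score: int) -> str:
    """Map a PSYRATS-H 'frequency' item score (0-4) to an AH subgroup label.

    Thresholds follow the text: 4 or 3 -> PH, 2 or 1 -> IH, 0 -> NH.
    """
    if frequency_score not in range(5):
        raise ValueError(f"frequency score must be 0-4, got {frequency_score!r}")
    if frequency_score >= 3:
        return "PH"  # persistent AH
    if frequency_score >= 1:
        return "IH"  # intermittent AH
    return "NH"      # remitted / no AH during the rating window


def group_participants(scores: dict) -> dict:
    """Group hypothetical participant IDs by AH subgroup, preserving input order."""
    groups = {"PH": [], "IH": [], "NH": []}
    for pid, score in scores.items():
        groups[ah_subgroup(score)].append(pid)
    return groups
```

For example, `group_participants({"s01": 4, "s02": 0, "s03": 1})` places `s01` in PH, `s03` in IH, and `s02` in NH.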

The assessment of EI was based on the MSCEIT-TC (Traditional Chinese version of the Mayer-Salovey-Caruso Emotional Intelligence Test). In a previous validation study, our confirmatory factor analysis of the MSCEIT-TC revealed that the four-factor model (i.e., four branches) provided the best fit [24]. Thus, the MSCEIT-TC measurements in this study were arranged into four branches: B1) perceiving emotions; B2) using emotions to facilitate reasoning in cognitive tasks; B3) understanding complex emotions and how emotions change over time or in different situations; and B4) managing emotions, assessed as one’s ability to intelligently apply emotional information to problem-solving [30,31].

▪ Stimuli and experimental paradigm

The sound stimuli used in this study were the monosyllabic sound “hey” pronounced with one neutral prosody (no emotion) and four emotional prosodies (happy, sad, angry, and fearful), adopted from our previously developed dataset [32]. During the experiment, the participants were instructed to focus their attention on silent clips from a movie (Ratatouille) and to disregard the sound stimuli; i.e., emotional prosody was meant to be processed implicitly. The sound stimuli were delivered binaurally through plastic earphones (using the Presentation® program) at an intensity of 70-75 dB in a pseudorandom order in which each neutral sound (50% of stimuli) was followed by one of the four emotional sounds (50% of stimuli). Each stimulus lasted 600 ms, with a jittered stimulus onset asynchrony (SOA) of 950–1050 ms. Each block contained a total of 495 sound stimuli, the first 15 of which were neutral. Each participant completed two blocks, with a short break of approximately one minute in between.
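The trial structure described above can be sketched as a sequence generator. The sketch rests on assumptions that the text does not state: after the 15 leading neutral sounds, the remaining 480 trials are treated as 240 neutral-emotional pairs, the four emotions are equally frequent (60 each), and the SOA is drawn from a uniform distribution. The function name `make_block` is hypothetical, and this is not the Presentation® script used in the study.

```python
import random

EMOTIONS = ("happy", "sad", "angry", "fearful")


def make_block(n_total=495, n_lead_neutral=15, seed=None):
    """Return a list of (label, soa_ms) tuples for one hypothetical stimulus block.

    Assumptions (not from the text): trials after the leading neutral run are
    neutral/emotional pairs, emotions are balanced, and SOA ~ Uniform(950, 1050) ms.
    """
    rng = random.Random(seed)
    n_pairs, remainder = divmod(n_total - n_lead_neutral, 2)
    if remainder or n_pairs % len(EMOTIONS):
        raise ValueError("trial counts do not split into balanced pairs")
    # Balanced pool of emotional labels, shuffled into a pseudorandom order.
    emotional = list(EMOTIONS) * (n_pairs // len(EMOTIONS))
    rng.shuffle(emotional)
    labels = ["neutral"] * n_lead_neutral
    for emo in emotional:
        labels.extend(["neutral", emo])  # each paired neutral is followed by an emotional sound
    return [(label, rng.uniform(950.0, 1050.0)) for label in labels]
```

Two calls with different seeds would give the two blocks per participant; fixing the seed makes a sequence reproducible.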

▪ MEG recording and data processing

MEG data were acquired using a whole-head 306-channel neuromagnetometer system (Vectorview, Elekta-Neuromag, Helsinki, Finland) in a magnetically shielded room. Three fiducial landmarks (nasion and bilateral preauricular points) and four head position indicator coils were localized using a 3D digitizer system (Polhemus Navigation Science, Colchester, USA). The three fiducial points were used for precise co-registration of the MEG data with anatomical magnetic resonance (MR) images. Anatomical MR images were acquired using a 3T GE Discovery™ MR750 system equipped with an 8-channel phased-array head coil, with a T1-weighted, magnetization-prepared, 3D fast spoiled gradient-recalled echo sequence (repetition time = 8.22 ms, echo time = 3.24 ms, inversion time = 450 ms, flip angle = 12°, matrix size = 256 × 256 × 192, and voxel size = 0.9 × 0.9 × 0.9 mm³). For group comparisons, individual T1-weighted MR images were morphed into a standard stereotactic space (Montreal Neurological Institute) using FreeSurfer software [33], and the estimated deformation fields were then applied to the MEG data.

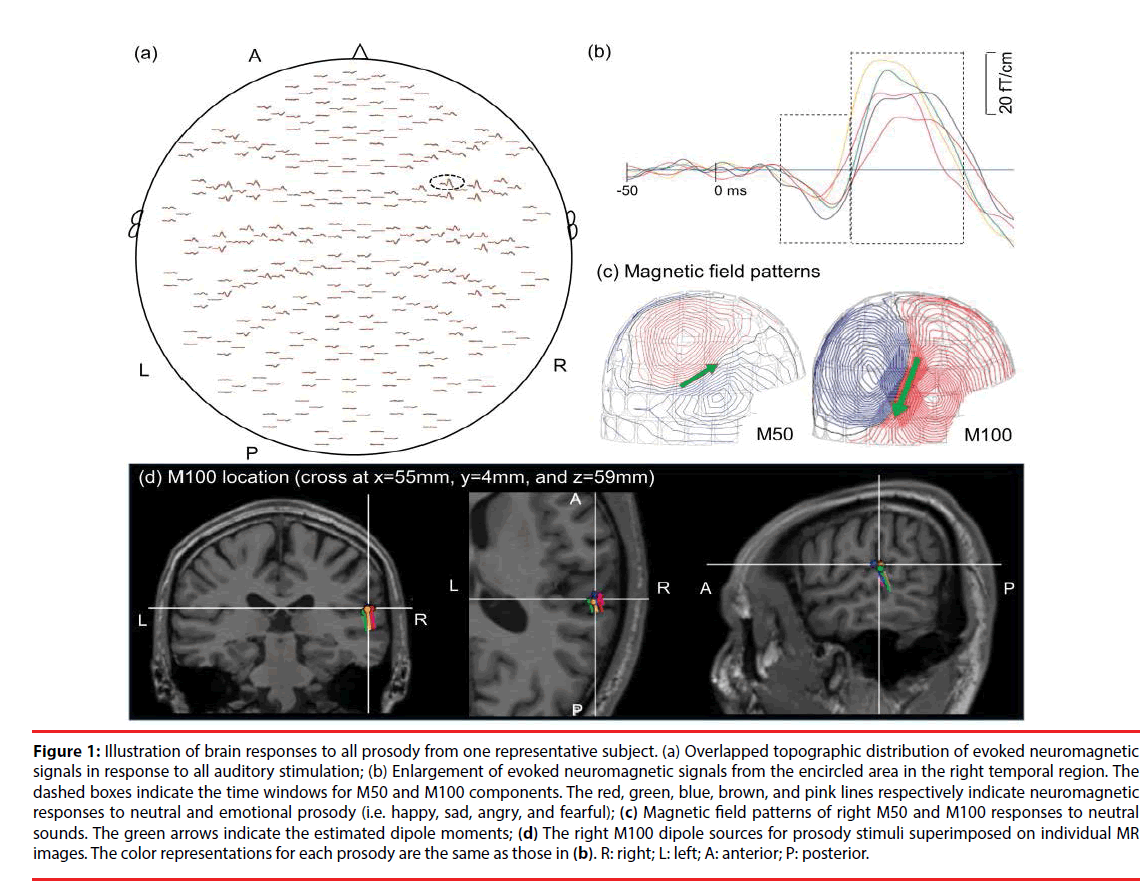

MEG signals were sampled at 1000 Hz and band-pass filtered online at 0.03-330 Hz. Epochs were extracted from 350 ms before to 500 ms after the onset of each sound stimulus. We discarded epochs contaminated by large eye blinks (electrooculographic amplitude >300 μV) or by magnetic fields induced by background noise (>6000 fT/cm). The remaining epochs were visually inspected for additional artifacts, and the artifact-free epochs were band-pass filtered at 1-40 Hz. Approximately 90% of the trials for each sound stimulus were retained and averaged (Figures 1A and 1B), and the number of trials per stimulus did not differ between groups (p>0.1). The interval from 350 ms to 50 ms before stimulus onset was used for baseline correction.
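The rejection, baseline-correction, and averaging steps above can be sketched in plain Python. This is an illustrative sketch, not the study's processing pipeline: the nested-list data layout (fT/cm for MEG, μV for EOG), the use of absolute rather than peak-to-peak amplitude for the thresholds, and the function name `average_clean_epochs` are assumptions, and the 1-40 Hz filtering and visual inspection are omitted.

```python
from statistics import fmean


def average_clean_epochs(meg, eog, times_ms, meg_limit=6000.0, eog_limit=300.0,
                         baseline=(-350.0, -50.0)):
    """Reject artifact epochs, baseline-correct each channel, and average.

    meg: list of epochs; each epoch is a list of channels; each channel a list of samples (fT/cm).
    eog: list of per-epoch EOG traces (microvolts), aligned with meg.
    times_ms: sample times (ms relative to stimulus onset) shared by all epochs.
    Returns (evoked, n_kept), where evoked is a list of averaged channel traces.
    """
    base_idx = [i for i, t in enumerate(times_ms) if baseline[0] <= t <= baseline[1]]
    if not base_idx:
        raise ValueError("no samples fall inside the baseline window")
    kept = []
    for epoch, eog_trace in zip(meg, eog):
        if max(abs(v) for v in eog_trace) > eog_limit:
            continue  # large eye blink
        if any(max(abs(v) for v in ch) > meg_limit for ch in epoch):
            continue  # background magnetic noise
        corrected = []
        for ch in epoch:
            offset = fmean(ch[i] for i in base_idx)  # mean over the baseline interval
            corrected.append([v - offset for v in ch])
        kept.append(corrected)
    if not kept:
        raise ValueError("no epochs survived rejection")
    n_ch, n_t = len(kept[0]), len(kept[0][0])
    evoked = [[fmean(ep[c][t] for ep in kept) for t in range(n_t)] for c in range(n_ch)]
    return evoked, len(kept)
```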

Figure 1: Illustration of brain responses to all prosody from one representative subject. (a) Overlapped topographic distribution of evoked neuromagnetic signals in response to all auditory stimulation; (b) Enlargement of evoked neuromagnetic signals from the encircled area in the right temporal region. The dashed boxes indicate the time windows for M50 and M100 components. The red, green, blue, brown, and pink lines respectively indicate neuromagnetic responses to neutral and emotional prosody (i.e. happy, sad, angry, and fearful); (c) Magnetic field patterns of right M50 and M100 responses to neutral sounds. The green arrows indicate the estimated dipole moments; (d) The right M100 dipole sources for prosody stimuli superimposed on individual MR images. The color representations for each prosody are the same as those in (b). R: right; L: left; A: anterior; P: posterior.

The cortical sources of the evoked responses were identified with the equivalent current dipole (ECD) model using Neuromag software [34], based on the least-squares method. The forward solution was calculated using a single-layer boundary element model (BEM) [35] based on the inner surface of the skull (created with FreeSurfer). A two-dipole model (one dipole per hemisphere) was applied to localize the neural responses to each sound at 40-80 ms (M50 component) and 81-150 ms (M100 component) after stimulus onset [36]. The initial location of each ECD was fit using 20-40 MEG channels over the auditory areas, with a goodness-of-fit criterion of 90%. After the initial ECD with the highest goodness-of-fit was identified, all channels were taken into account to derive the best explanation of the globally recorded magnetic field. The distinct dipolar polarity patterns of the M50 and M100 responses (anterior-superior versus posterior-inferior; Figure 1C) were used to differentiate M50 sources from M100 sources. The peak amplitude, latency, and location of each dipole source (Figure 1D) were then estimated, as described in our previous study [37].
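Once a dipole moment time course is available, reading off the peak amplitude and latency within the M50 (40-80 ms) and M100 (81-150 ms) windows can be sketched as follows. The ECD fitting itself is not reproduced; defining the peak as the sample with the largest absolute moment, and the names `peak_in_window` and `WINDOWS`, are assumptions for illustration. Taking the absolute value sidesteps the opposite polarities of the M50 and M100 sources.

```python
# Latency windows (ms after stimulus onset) taken from the text.
WINDOWS = {"M50": (40.0, 80.0), "M100": (81.0, 150.0)}


def peak_in_window(times_ms, moment_nam, window_ms):
    """Return (peak_amplitude_nAm, peak_latency_ms) of a dipole moment time course."""
    lo, hi = window_ms
    candidates = [(abs(m), t) for t, m in zip(times_ms, moment_nam) if lo <= t <= hi]
    if not candidates:
        raise ValueError(f"no samples inside window {window_ms}")
    # Largest |moment|; max() keeps the first (earliest) sample on ties.
    amplitude, latency = max(candidates, key=lambda c: c[0])
    return amplitude, latency
```

At the 1000 Hz sampling rate used here, `times_ms` would be spaced 1 ms apart.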

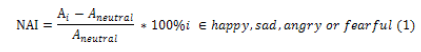

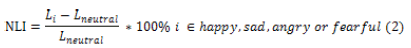

To formulate an index of brain responses to emotional sounds, we developed a novel procedure similar to the scheme used in studies on emotional prosody using BOLD contrast fMRI [38,39]. The normalized amplitude index (NAI) and normalized latency index (NLI) for M50 and M100 components were calculated as follows:
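Assuming each index is the percentage change of an emotional response relative to the neutral response (an assumed form, consistent with the negative NLI values for faster responses and with the magnitudes in Tables 3 and 4), the indices for an emotional prosody $e \in \{\text{happy}, \text{sad}, \text{angry}, \text{fearful}\}$ could be written as:

$$
\mathrm{NAI}_{e} = \frac{A_{e} - A_{\mathrm{neutral}}}{A_{\mathrm{neutral}}} \times 100\%, \qquad
\mathrm{NLI}_{e} = \frac{L_{e} - L_{\mathrm{neutral}}}{L_{\mathrm{neutral}}} \times 100\%
$$

Under this form, a neutral latency of 62.3 ms and an emotional latency of 50.1 ms give $\mathrm{NLI} \approx -19.6\%$, so a negative NLI denotes a response that is faster than the response to the neutral sound.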

where A and L represent the source peak amplitude and latency, respectively, and NAI and NLI represent the strength and speed, respectively, of the emotion-specific brain responses conveyed by emotional prosody. Normalizing to the responses to neutral sounds made it possible to compare emotion-specific responses between CPs and the schizophrenia group as well as among the three schizophrenia subgroups (i.e., PH, IH, and NH).
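A minimal Python sketch of this normalization follows, assuming the percentage-change form (the exact formula is an assumption here). The names `normalized_index` and `indices_for_subject` and the dictionary layout keyed by stimulus label are hypothetical. If indices are computed per participant, the group mean of an index need not equal the index computed from group-mean amplitudes or latencies.

```python
def normalized_index(emotional: float, neutral: float) -> float:
    """Assumed NAI/NLI form: percentage change of an emotional response vs. neutral."""
    if neutral == 0:
        raise ValueError("neutral reference must be non-zero")
    return 100.0 * (emotional - neutral) / neutral


def indices_for_subject(amp: dict, lat: dict) -> dict:
    """Compute NAI and NLI for every non-neutral stimulus of one dipole source.

    amp, lat: peak amplitude (nAm) and latency (ms) keyed by stimulus label,
    including a 'neutral' entry (hypothetical layout).
    """
    return {
        emo: {"NAI": normalized_index(amp[emo], amp["neutral"]),
              "NLI": normalized_index(lat[emo], lat["neutral"])}
        for emo in amp
        if emo != "neutral"
    }
```

With this convention, a delayed response relative to another group shows up as a smaller absolute NLI value.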

▪ Statistical analyses

Statistical analyses were conducted using IBM SPSS Statistics, version 21.0 (IBM Corp., Armonk, NY, USA). For all demographic data, clinical assessments, and brain responses, we used independent-samples t-tests for comparisons between the CP and schizophrenia groups, and one-way ANOVA with Tukey-HSD post hoc tests for within-group comparisons among the three schizophrenia subgroups. For NAI and NLI, non-parametric analyses were applied based on the results of Levene’s test for homogeneity of variance and the Kolmogorov-Smirnov test for normality. The Mann-Whitney U-test and the Kruskal-Wallis test were used for between-group and within-group comparisons, respectively, the latter followed by Dunn’s post hoc test. Test statistics obtained from the non-parametric tests were transformed into eta squared, and effect sizes were then expressed as a standardized measure (Cohen’s d) [40]. Spearman rank correlation was performed to investigate the relationships between EI scores (i.e., MSCEIT-TC scores) and the NAI and NLI values for each emotional sound in each group.
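The conversion from non-parametric test statistics to eta squared and then to Cohen’s d can be sketched as follows. The text does not state which formulas were used, so those below are assumptions: η² = Z²/N for the Mann-Whitney test (the square of r = Z/√N), η² = (H − k + 1)/(n − k) for the Kruskal-Wallis test with k groups, and d = 2√η²/√(1 − η²). The function names are hypothetical.

```python
import math


def eta_squared_from_z(z: float, n_total: int) -> float:
    """Mann-Whitney: eta^2 = r^2 with r = Z / sqrt(N) (assumed convention)."""
    return z * z / n_total


def eta_squared_from_h(h: float, k_groups: int, n_total: int) -> float:
    """Kruskal-Wallis: eta^2_H = (H - k + 1) / (n - k) (assumed convention)."""
    return (h - k_groups + 1) / (n_total - k_groups)


def d_from_eta_squared(eta_sq: float) -> float:
    """Convert eta squared to Cohen's d: d = 2*sqrt(eta^2) / sqrt(1 - eta^2)."""
    if not 0.0 <= eta_sq < 1.0:
        raise ValueError("eta squared must be in [0, 1)")
    return 2.0 * math.sqrt(eta_sq) / math.sqrt(1.0 - eta_sq)
```

For example, `d_from_eta_squared(eta_squared_from_z(2.06, 79))` is about 0.48 and `d_from_eta_squared(eta_squared_from_h(8.84, 3, 60))` is about 0.74; the sample sizes 79 and 60 are illustrative values, not counts taken from the study.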

Results

▪ Demographic and clinical information of participants

Table 1 lists the demographic data for the schizophrenia and CP groups. The SPs were divided into three subgroups (PH, IH, and NH) according to their PSYRATS-H scores. In terms of clinical symptoms, we observed statistically significant differences among the three schizophrenia subgroups in PANSS-positive (F(2,60) = 12.78, p<0.001, effect size = 1.16) and PANSS-total scores (F(2,60) = 3.27, p = 0.045, effect size = 0.49). Tukey post hoc tests revealed that PANSS-positive scores in the PH group were higher than those in the NH (p<0.001) and IH groups (p = 0.042), and that PANSS-total scores in the PH group were higher than those in the NH group (p = 0.042). PANSS-positive scores in the IH group were also higher than those in the NH group (p = 0.033). Mean PANSS-total scores ranged from 62.7 to 73.5, indicating that the SPs were clinically stable.

| Group | SPs: PH | SPs: IH | SPs: NH | Within-group differences | CP | Between-group differences |
|---|---|---|---|---|---|---|
| Number | 21 | 21 | 21 | | 21 | |
| Age (years) | 37.1 ± 7.1 | 38.4 ± 7.9 | 36.6 ± 12.0 | n.s. | 35.3 ± 5.8 | n.s. |
| Education (years) | 13.4 ± 3.0 | 12.8 ± 2.6 | 13.4 ± 2.6 | n.s. | 15.9 ± 2.3 | CP>SPs** |
| Gender (F:M) | 12:9 | 12:9 | 9:12 | | 11:10 | |
| Marital status | 4/21 | 5/21 | 3/21 | | 6/21 | |
| DOI (years) | 13.7 ± 7.0 | 12.2 ± 6.9 | 12.3 ± 10.3 | n.s. | n.s. | |
| PANSS | | | | | | |
| PANSS_positive | 20.5 ± 4.2 | 17.1 ± 5.4 | 13.5 ± 3.7 | PH>NH**; IH>NH*; PH>IH* | | |
| PANSS_negative | 17.6 ± 5.8 | 19.2 ± 5.2 | 17.2 ± 6.4 | n.s. | | |
| PANSS_general | 35.4 ± 5.7 | 34.2 ± 7.5 | 31.9 ± 7.2 | n.s. | | |
| PANSS_total | 73.5 ± 12.2 | 70.5 ± 15.8 | 62.7 ± 14.3 | PH>NH* | | |
| PANSS_depression | 9.0 ± 2.5 | 7.8 ± 2.2 | 7.1 ± 2.4 | n.s. | | |
| PSYRATS-H | 29.5 ± 5.5 | 20.3 ± 6.3 | 0 | PH>IH**; IH>NH**; PH>NH** | | |

Data are presented as mean ± standard deviation. * p<0.05, ** p<0.001 for significant differences; n.s. indicates non-significant differences. Within-group differences among the three schizophrenia subgroups were evaluated using one-way ANOVA with Tukey-HSD post hoc comparisons. Between-group differences (SPs vs. CP) were evaluated using an independent-samples t-test. PH: persistent hallucinating; IH: intermittent hallucinating; NH: non-hallucinating; CP: control participants; DOI: duration of illness; PANSS: positive and negative syndrome scale; PSYRATS-H: psychotic symptoms rating scale - hallucination subscale.

Table 1: Demographic and clinical data of all participants.

▪ Evaluation of EI performance

Table 2 lists the MSCEIT-TC data used to evaluate EI performance in the schizophrenia and CP groups. The reported MSCEIT-TC scores were rescaled from raw scores using a norm-referenced method [24]. EI performance in the schizophrenia group was lower than that in the CP group in all branches. No significant differences in EI performance were observed among the three schizophrenia subgroups.

| Measure | SPs: PH | SPs: IH | SPs: NH | Within-group differences | CP | Between-group differences |
|---|---|---|---|---|---|---|
| Number | 21 | 21 | 21 | | 21 | |
| Branch 1 (perceiving) | 92.4 ± 18.1 | 96.2 ± 18.4 | 87.1 ± 20.2 | n.s. | 107.2 ± 14.4 | CP>SPs** |
| Branch 2 (facilitating) | 97.1 ± 16.5 | 95.7 ± 23.3 | 93.5 ± 24.8 | n.s. | 104.3 ± 11.3 | CP>SPs** |
| Branch 3 (understanding) | 87.6 ± 25.3 | 89.5 ± 26.8 | 89.5 ± 24.5 | n.s. | 103.9 ± 11.1 | CP>SPs** |
| Branch 4 (managing) | 94.1 ± 21.0 | 91.2 ± 20.0 | 92.2 ± 17.0 | n.s. | 103.9 ± 12.0 | CP>SPs** |
| Total score | 92.8 ± 20.5 | 93.9 ± 22.2 | 89.8 ± 24.9 | n.s. | 105.5 ± 11.1 | CP>SPs** |

Data are presented as mean ± standard deviation. * p<0.05, ** p<0.001 for significant differences; n.s. indicates non-significant differences. Within-group differences among the three schizophrenia subgroups were evaluated using one-way ANOVA with Tukey-HSD post hoc comparisons. Between-group differences (SPs vs. CP) were evaluated using an independent-samples t-test. PH: persistent hallucinating; IH: intermittent hallucinating; NH: non-hallucinating; CP: control participants; MSCEIT-TC: Traditional Chinese version of the Mayer-Salovey-Caruso Emotional Intelligence Test.

Table 2: MSCEIT-TC assessments for all participants.

▪ Source estimation of M50 and M100 brain responses

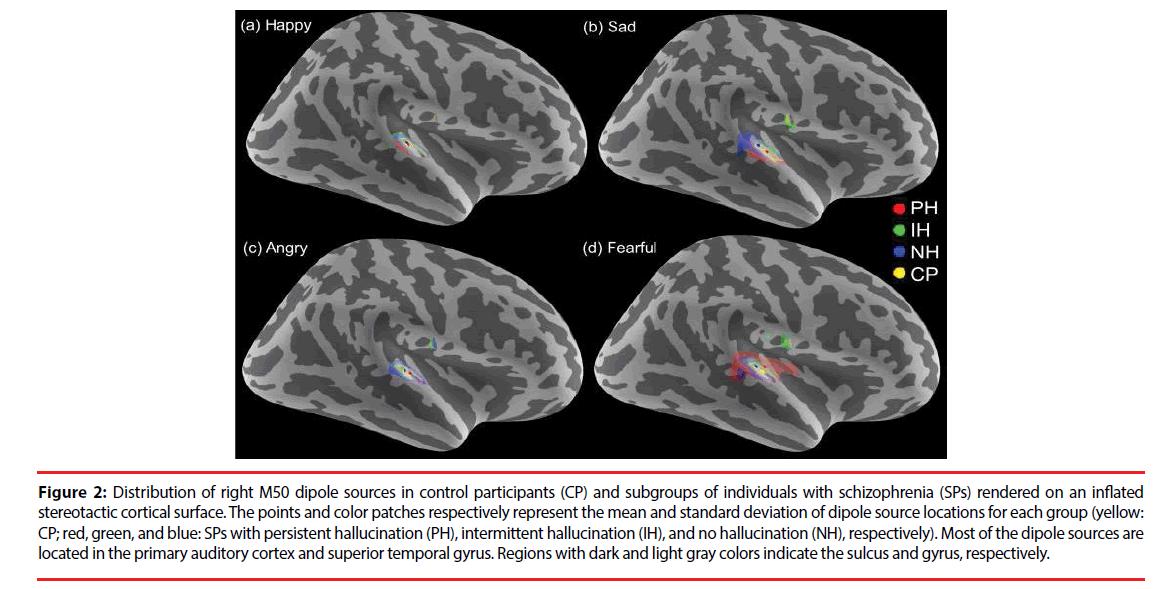

Across all participants, 95% of the M50 and 96% of the M100 dipoles were identifiable over either hemisphere (795 M50 and 803 M100 ECDs out of 840 cases; 840 = 84 participants × 2 hemispheres × 5 sound stimuli). The dipole sources of the M50 and M100 responses were localized in the bilateral auditory cortices and superior temporal gyri (Figure 2). No significant differences in dipole location were observed for any of the sources in between-group or within-group comparisons.

Figure 2: Distribution of right M50 dipole sources in control participants (CP) and subgroups of individuals with schizophrenia (SPs) rendered on an inflated stereotactic cortical surface. The points and color patches respectively represent the mean and standard deviation of dipole source locations for each group (yellow: CP; red, green, and blue: SPs with persistent hallucination (PH), intermittent hallucination (IH), and no hallucination (NH), respectively). Most of the dipole sources are located in the primary auditory cortex and superior temporal gyrus. Regions with dark and light gray colors indicate the sulcus and gyrus, respectively.

Table 3 lists the peak dipole amplitudes and latencies of M50 and M100 dipole sources. M100 amplitudes in the schizophrenia group were lower than those in the CP group for all sound stimuli in both hemispheres (except for the fearful sound in the left hemisphere). ANOVA results revealed no significant differences among the three schizophrenia subgroups.

| Stimulus | Component | Hemisphere | PH amplitude (nAm) | PH latency (ms) | IH amplitude (nAm) | IH latency (ms) | NH amplitude (nAm) | NH latency (ms) | CP amplitude (nAm) | CP latency (ms) |
|---|---|---|---|---|---|---|---|---|---|---|
| Neutral | M50 | left | 16.3 ± 1.7 | 65.0 ± 1.6 | 16.5 ± 1.7 | 63.4 ± 1.6 | 12.8 ± 2.1 | 63.9 ± 2.0 | 15.0 ± 1.5 | 63.3 ± 1.4 |
| Neutral | M50 | right | 15.1 ± 1.7 | 65.4 ± 1.7 | 15.3 ± 1.9 | 63.7 ± 2.0 | 12.0 ± 1.4 | 62.1 ± 2.2 | 13.4 ± 1.7 | 62.3 ± 1.6 |
| Neutral | M100 | left | 9.2 ± 0.8 | 109.5 ± 2.4 | 13.3 ± 1.6 | 105.8 ± 3.1 | 12.5 ± 1.6 | 111.4 ± 2.8 | 17.2 ± 3.0* | 112.3 ± 2.5 |
| Neutral | M100 | right | 9.9 ± 1.0 | 111.6 ± 2.5 | 13.4 ± 2.0 | 107.2 ± 3.0 | 13.0 ± 1.4 | 103.6 ± 3.0 | 16.3 ± 2.1* | 106.3 ± 1.8 |
| Happy | M50 | left | 15.4 ± 1.6 | 60.9 ± 2.0 | 15.4 ± 1.9 | 57.2 ± 2.0 | 17.6 ± 2.1 | 58.4 ± 1.7 | 17.6 ± 1.6 | 58.3 ± 1.5 |
| Happy | M50 | right | 15.1 ± 2.7 | 58.2 ± 1.7 | 17.1 ± 2.1 | 57.7 ± 1.5 | 14.3 ± 1.7 | 59.4 ± 1.9 | 14.1 ± 1.4 | 58.4 ± 1.3 |
| Happy | M100 | left | 12.2 ± 1.6 | 107.6 ± 2.6 | 14.4 ± 1.8 | 100.2 ± 3.2 | 14.5 ± 1.6 | 103.2 ± 2.5 | 19.9 ± 3.9* | 106.3 ± 2.6 |
| Happy | M100 | right | 14.2 ± 2.2 | 106.1 ± 2.2 | 15.9 ± 2.2 | 100.9 ± 2.6 | 12.3 ± 1.6 | 99.6 ± 2.8 | 19.3 ± 2.2* | 101.1 ± 1.8 |
| Sad | M50 | left | 15.9 ± 1.7 | 64.7 ± 1.7 | 16.9 ± 1.4 | 64.6 ± 2.0 | 17.6 ± 2.4 | 61.9 ± 2.4 | 17.0 ± 1.3 | 64.1 ± 1.8 |
| Sad | M50 | right | 14.8 ± 1.8 | 62.7 ± 1.6 | 17.3 ± 1.9 | 63.2 ± 1.9 | 13.6 ± 1.9 | 63.7 ± 2.2 | 14.7 ± 2.0 | 59.9 ± 1.6 |
| Sad | M100 | left | 13.8 ± 2.3 | 110.9 ± 3.2 | 15.3 ± 2.0 | 105.5 ± 3.0 | 13.2 ± 1.2 | 109.6 ± 2.4 | 24.5 ± 3.5** | 111.8 ± 2.4 |
| Sad | M100 | right | 15.2 ± 2.0 | 107.3 ± 2.7 | 15.4 ± 1.8 | 104.4 ± 2.4 | 13.4 ± 1.4 | 102.5 ± 2.4 | 25.3 ± 2.8** | 105.8 ± 1.9 |
| Angry | M50 | left | 17.7 ± 2.1 | 53.3 ± 2.0 | 18.4 ± 1.7 | 52.4 ± 2.0 | 19.1 ± 2.5 | 51.7 ± 1.7 | 17.7 ± 1.9 | 50.5 ± 2.4 |
| Angry | M50 | right | 16.2 ± 1.9 | 50.5 ± 1.5 | 16.4 ± 2.3 | 50.0 ± 2.2 | 16.5 ± 1.8 | 52.6 ± 2.1 | 14.2 ± 2.0 | 50.1 ± 1.7 |
| Angry | M100 | left | 12.8 ± 1.2 | 97.0 ± 2.9 | 14.7 ± 2.2 | 95.9 ± 3.7 | 17.2 ± 1.7 | 95.7 ± 2.9 | 23.6 ± 3.5** | 98.4 ± 2.5 |
| Angry | M100 | right | 13.9 ± 1.5 | 97.2 ± 2.7 | 14.5 ± 1.9 | 92.5 ± 2.6 | 15.5 ± 1.9 | 89.1 ± 2.7 | 24.4 ± 3.5** | 91.9 ± 1.8 |
| Fearful | M50 | left | 17.2 ± 1.4 | 52.9 ± 2.4 | 18.8 ± 2.1 | 53.4 ± 2.3 | 15.8 ± 2.3 | 52.0 ± 2.7 | 14.9 ± 1.5 | 51.8 ± 2.2 |
| Fearful | M50 | right | 15.2 ± 1.5 | 55.0 ± 2.3 | 16.7 ± 2.6 | 50.7 ± 2.1 | 15.2 ± 2.0 | 54.4 ± 2.5 | 14.6 ± 1.8 | 50.3 ± 2.0 |
| Fearful | M100 | left | 13.1 ± 1.3 | 99.3 ± 2.7 | 16.5 ± 1.3 | 91.7 ± 2.8 | 13.5 ± 1.3 | 96.8 ± 2.8 | 18.5 ± 2.8 | 104.3 ± 2.7* |
| Fearful | M100 | right | 10.7 ± 1.0 | 98.5 ± 3.0 | 12.6 ± 1.9 | 97.5 ± 3.1 | 12.2 ± 1.2 | 94.0 ± 3.2 | 17.0 ± 2.0** | 95.8 ± 1.6 |

Data are presented as mean ± standard error. * p<0.05 and ** p<0.01 for significant between-group differences (SPs vs. CP) using an independent-samples two-tailed Student's t-test. No statistically significant within-group differences were observed among the three subgroups of schizophrenia.

Table 3: Peak dipole amplitudes and latencies of auditory evoked responses to all prosody for all participants.

▪ Comparisons using the emotion-specific response index

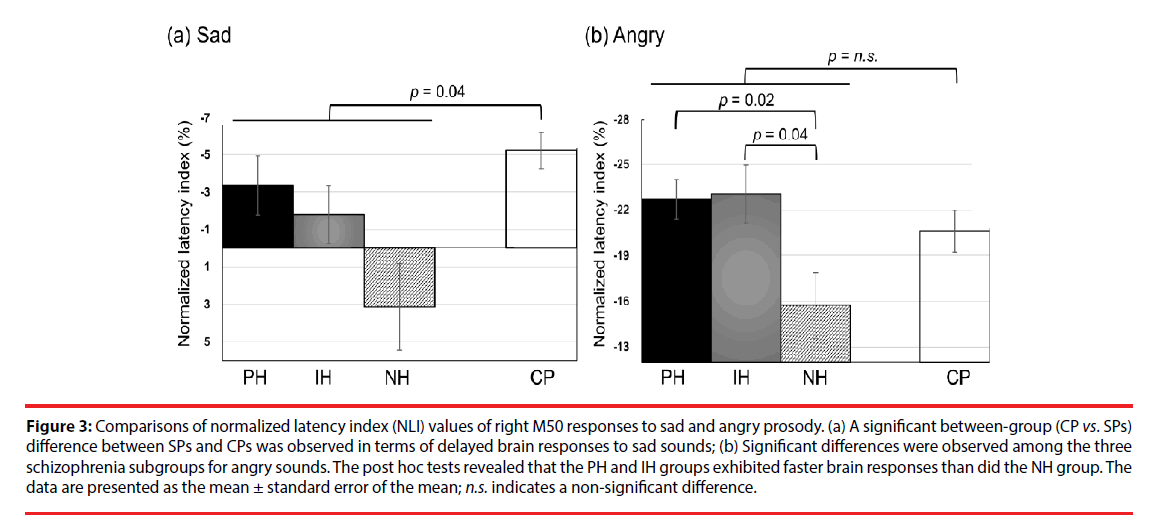

The NLI and NAI of M50 and M100 responses to emotional sounds are listed in Tables 4 and 5, respectively. We observed no significant differences in the emotion-specific amplitude index (NAI) in either between-group or within-group comparisons (Table 5). For the latency index, the schizophrenia group exhibited delayed right M50 responses (i.e., a smaller absolute NLI value) to the sad sound compared with the CP group (U(77) = 360, Z = 2.06, p = 0.04, effect size = 0.48; Figure 3a). Kruskal-Wallis tests revealed significant differences in the right M50 responses to the angry sound among the three schizophrenia subgroups (χ²(2) = 8.84, p = 0.012, effect size = 0.74; Figure 3b), and post hoc tests showed that the PH and IH groups exhibited faster responses (i.e., a larger absolute NLI value) than the NH group (Q = -2.71, p = 0.02 and Q = -2.45, p = 0.043, respectively). Notably, the absolute NLI value in response to the angry sound was approximately ten times greater than that for the sad sound, which suggests that the angry stimulus carries high emotional salience.
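For three groups, the Kruskal-Wallis statistic has k − 1 = 2 degrees of freedom, and the upper-tail χ² probability then has the closed form p = exp(−H/2). The sketch below computes H from pooled average ranks (without the tie correction) and that p-value; applied to the reported χ²(2) = 8.84, it gives p ≈ 0.012, in line with the value above. Dunn’s post hoc test is not shown, and the function names are hypothetical.

```python
import math


def average_ranks(values):
    """1-based ranks of the pooled sample; tied values share their mean rank."""
    return [sum(x < v for x in values) + (sum(x == v for x in values) + 1) / 2
            for v in values]


def kruskal_wallis_h(*groups):
    """Kruskal-Wallis H without tie correction: 12/(N(N+1)) * sum(R_i^2/n_i) - 3(N+1)."""
    pooled = [v for g in groups for v in g]
    ranks = average_ranks(pooled)
    n = len(pooled)
    weighted, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])  # ranks belonging to this group
        weighted += rank_sum ** 2 / len(g)
        start += len(g)
    return 12.0 * weighted / (n * (n + 1)) - 3.0 * (n + 1)


def p_value_df2(h):
    """Upper-tail chi-square p-value, valid only for df = 2 (exactly three groups)."""
    return math.exp(-h / 2.0)
```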

| Emotion | Component | Hemisphere | PH NLI | IH NLI | NH NLI | Within-group differences | CP NLI | Between-group differences |
|---|---|---|---|---|---|---|---|---|
| Happy | M50 | left | -6.4 ± 2.1 | -9.4 ± 3.1 | -6.9 ± 2.2 | n.s. | -8.5 ± 1.3 | n.s. |
| Happy | M50 | right | -11.2 ± 2.3 | -8.8 ± 1.7 | -7.0 ± 1.3 | n.s. | -7.1 ± 1.0 | n.s. |
| Happy | M100 | left | -1.8 ± 1.3 | -5.3 ± 1.2 | -6.4 ± 1.8 | n.s. | -5.5 ± 1.3 | n.s. |
| Happy | M100 | right | -4.8 ± 1.5 | -5.8 ± 1.9 | -3.7 ± 1.3 | n.s. | -5.2 ± 1.0 | n.s. |
| Sad | M50 | left | -1.4 ± 1.4 | 0.2 ± 2.2 | -2.2 ± 2.0 | n.s. | 0.5 ± 1.8 | n.s. |
| Sad | M50 | right | -3.4 ± 1.6 | -1.8 ± 1.5 | 3.2 ± 2.4 | n.s. | -5.2 ± 1.1 | CP<SPs* |
| Sad | M100 | left | 1.7 ± 2.1 | 0.8 ± 2.2 | -1.0 ± 2.5 | n.s. | -0.6 ± 1.5 | n.s. |
| Sad | M100 | right | -3.7 ± 1.6 | -2.0 ± 2.3 | -0.5 ± 1.7 | n.s. | -0.2 ± 1.1 | n.s. |
| Angry | M50 | left | -18.3 ± 1.7 | -17.3 ± 2.5 | -17.9 ± 2.2 | n.s. | -21.2 ± 3.1 | n.s. |
| Angry | M50 | right | -22.7 ± 1.3 | -23.1 ± 1.9 | -15.6 ± 2.2 | PH<NH*; IH<NH* | -20.6 ± 1.4 | n.s. |
| Angry | M100 | left | -11.3 ± 2.6 | -10.8 ± 3.6 | -14.1 ± 1.8 | n.s. | -12.4 ± 1.8 | n.s. |
| Angry | M100 | right | -12.8 ± 1.5 | -13.3 ± 1.7 | -14.0 ± 0.7 | n.s. | -13.8 ± 1.0 | n.s. |
| Fearful | M50 | left | -18.3 ± 2.6 | -16.2 ± 2.3 | -18.9 ± 2.3 | n.s. | -19.7 ± 2.1 | n.s. |
| Fearful | M50 | right | -16.9 ± 2.2 | -21.2 ± 1.7 | -12.9 ± 2.6 | n.s. | -20.5 ± 2.0 | n.s. |
| Fearful | M100 | left | -10.2 ± 2.0 | -11.7 ± 3.2 | -13.5 ± 2.2 | n.s. | -7.4 ± 1.4 | n.s. |
| Fearful | M100 | right | -12.3 ± 1.7 | -9.2 ± 1.2 | -9.5 ± 1.5 | n.s. | -9.6 ± 0.8 | n.s. |

Data are presented as mean ± standard error. * p<0.05 for significant differences; n.s. indicates non-significant differences. Within-group differences among the three subgroups of schizophrenia were evaluated using Dunn's nonparametric post hoc comparisons after the Kruskal-Wallis test. Between-group differences (SPs vs. CP) were evaluated using the Mann-Whitney U-test.

Table 4: Normalized latency index (NLI) of M50 and M100 responses to emotional prosody.

| Emotion | Component | Hemisphere | PH NAI | IH NAI | NH NAI | Within-group differences | CP NAI | Between-group differences |
|---|---|---|---|---|---|---|---|---|
| Happy | M50 | left | 24.5 ± 24.6 | 1.4 ± 12.2 | 83.3 ± 35.4 | n.s. | 18.2 ± 8.3 | n.s. |
| Happy | M50 | right | 11.4 ± 14.9 | 34.2 ± 19.3 | 27.3 ± 13.3 | n.s. | 32.0 ± 17.5 | n.s. |
| Happy | M100 | left | 48.3 ± 27.7 | 26.0 ± 25.0 | 46.8 ± 21.5 | n.s. | 28.0 ± 14.3 | n.s. |
| Happy | M100 | right | 58.0 ± 25.5 | 35.9 ± 20.1 | 7.5 ± 13.3 | n.s. | 21.8 ± 7.7 | n.s. |
| Sad | M50 | left | 12.2 ± 14.2 | 42.1 ± 32.1 | 50.8 ± 13.9 | n.s. | 21.7 ± 11.4 | n.s. |
| Sad | M50 | right | -2.7 ± 7.3 | 38.3 ± 15.7 | 21.4 ± 11.1 | n.s. | 20.0 ± 13.0 | n.s. |
| Sad | M100 | left | 53.1 ± 29.0 | 21.7 ± 15.1 | 41.9 ± 23.6 | n.s. | 55.6 ± 16.1 | n.s. |
| Sad | M100 | right | 94.1 ± 33.1 | 48.5 ± 21.9 | 19.1 ± 13.1 | n.s. | 77.2 ± 25.2 | n.s. |
| Angry | M50 | left | 27.0 ± 15.8 | 31.8 ± 18.5 | 82.9 ± 31.9 | n.s. | 36.8 ± 28.3 | n.s. |
| Angry | M50 | right | 17.7 ± 9.7 | 18.5 ± 10.8 | 77.5 ± 39.1 | n.s. | 15.9 ± 12.2 | n.s. |
| Angry | M100 | left | 54.0 ± 15.6 | 24.8 ± 17.8 | 85.7 ± 30.9 | n.s. | 53.8 ± 18.0 | n.s. |
| Angry | M100 | right | 62.1 ± 24.9 | 35.7 ± 22.6 | 41.0 ± 19.7 | n.s. | 56.5 ± 16.9 | n.s. |
| Fearful | M50 | left | 36.0 ± 38.0 | 21.4 ± 16.5 | 56.8 ± 39.1 | n.s. | -3.0 ± 9.7 | n.s. |
| Fearful | M50 | right | 18.1 ± 11.5 | 18.2 ± 16.3 | 43.6 ± 22.4 | n.s. | 42.5 ± 28.8 | n.s. |
| Fearful | M100 | left | 36.6 ± 17.7 | 54.0 ± 23.2 | 45.7 ± 30.0 | n.s. | 8.3 ± 6.8 | n.s. |
| Fearful | M100 | right | 29.3 ± 21.2 | 26.0 ± 35.0 | 14.5 ± 12.3 | n.s. | 10.8 ± 11.6 | n.s. |

Data are presented as mean ± standard error. No statistically significant between-group or within-group differences were found. PH: persistent hallucinating; IH: intermittent hallucinating; NH: non-hallucinating; CP: control participants; n.s. indicates non-significant differences.

Table 5: Normalized amplitude index (NAI) of M50 and M100 responses to emotional prosody.

Figure 3: Comparisons of normalized latency index (NLI) values of right M50 responses to sad and angry prosody. (a) A significant between-group difference (SPs vs. CPs) was observed, with delayed brain responses to sad sounds in SPs; (b) significant differences were observed among the three schizophrenia subgroups for angry sounds, and post hoc tests revealed that the PH and IH groups exhibited faster brain responses than the NH group. The data are presented as the mean ± standard error of the mean; n.s. indicates a non-significant difference.

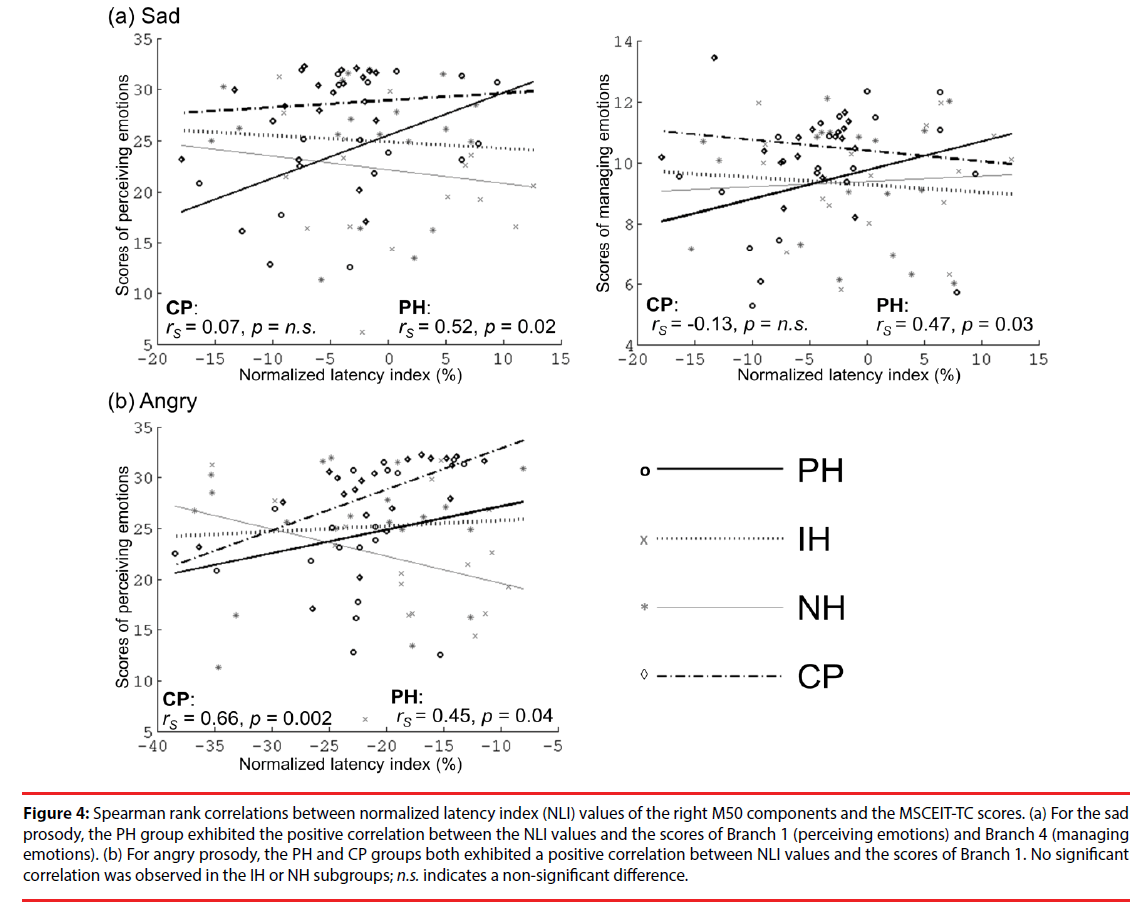

▪ Correlations between brain responses and EI performance

Figure 4 presents the correlations between right M50 NLI values for sad and angry sounds and MSCEIT-TC scores. For sad sounds, right M50 NLI values in the PH group were positively correlated with Branch 1 (rs = 0.52, p = 0.02) and Branch 4 scores (rs = 0.47, p = 0.03). For angry sounds, right M50 NLI values in the PH and CP groups were positively correlated with Branch 1 scores (rs = 0.45, p = 0.04 and rs = 0.66, p = 0.002, respectively). Because faster responses correspond to more negative NLI values, these positive correlations indicate that participants with faster brain responses to negative emotions have poorer EI in perceiving or managing emotions. No significant correlations were observed between EI performance and brain responses to happy or fearful sounds.
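Spearman’s rank correlation can be sketched in plain Python as the Pearson correlation of average ranks, which also handles tied scores. The function names are hypothetical and p-values are not computed. Because a faster response gives a more negative NLI, a positive rs between NLI and a branch score means that faster responses go with lower scores.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values receive the mean of the positions they occupy."""
    return [sum(x < v for x in values) + (sum(x == v for x in values) + 1) / 2
            for v in values]


def spearman_rs(x, y):
    """Spearman rank correlation = Pearson correlation of the two rank vectors.

    Raises ZeroDivisionError if either input is constant (all ranks equal).
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)
```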

Figure 4: Spearman rank correlations between normalized latency index (NLI) values of the right M50 components and MSCEIT-TC scores. (a) For the sad prosody, the PH group exhibited positive correlations between NLI values and the scores of Branch 1 (perceiving emotions) and Branch 4 (managing emotions). (b) For the angry prosody, the PH and CP groups both exhibited a positive correlation between NLI values and Branch 1 scores. No significant correlations were observed in the IH or NH subgroups; n.s. indicates a non-significant correlation.

Discussion

In this study, we combined emotion-specific indices of early auditory brain responses with an implicit emotional prosody perception task to examine how the early processing of emotional prosody interacts with AH and EI performance in SPs. Overall, the SPs presented deficits in the early perceptual processing of a negative emotion (sadness) compared with CPs. In the PH group, these deficits were also associated with impaired downstream social cognition, as indexed by EI. Our results suggest that previous and/or concurrent AH may act as a trait marker that hampers the processing of negative emotions such as sadness. By contrast, active AH (i.e., PH/IH vs. NH) may act as a state marker, accelerating the processing of negative emotions with high arousal potential (i.e., anger) as a compensatory neural predisposition. However, in the context of persistent hallucinations (PH group), this adaptive mechanism does not necessarily operate optimally in the perceptual processing of negative emotions, which may in turn impair social cognition.

In the present study, the overall attenuation of M100 responses to prosodic stimuli in SPs (vs. CPs) was consistent with previous reports [41-43]. Such subdued central auditory responses may be attributed to increased noisiness in the brain [44], a low ceiling for auditory neural entrainment, and a limited systemic capacity to integrate incoming stimuli [45], implying dysfunction in early sensory processing at the level of the auditory cortex in SPs. Despite this overall reduction in M100 amplitudes, the normalized amplitude index (NAI) may not be a sensitive measure of the early perceptual processing of emotions, owing to substantial between-subject variability (i.e., large standard errors; Table 5).
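The normalized indices are defined in the Methods section, which is not reproduced here. Purely as an illustration, the sketch below assumes a hypothetical ratio form (emotional response divided by neutral response, per participant) and shows why large between-subject spread inflates the standard error of the mean (SEM = sample SD / √n), which makes a group mean such as the NAI hard to interpret. The function names and numbers are hypothetical.

```python
import math
import statistics


def normalized_index(emotional, neutral):
    """Hypothetical per-participant index: emotional response / neutral response.

    This ratio form is an assumption for illustration, not the paper's definition.
    """
    if len(emotional) != len(neutral):
        raise ValueError("need one neutral value per emotional value")
    return [e / n for e, n in zip(emotional, neutral)]


def mean_and_sem(values):
    """Group mean and standard error of the mean (sample SD divided by sqrt(n))."""
    if len(values) < 2:
        raise ValueError("SEM needs at least two values")
    return statistics.mean(values), statistics.stdev(values) / math.sqrt(len(values))


# Two made-up groups whose indices share a mean of 1.0 but differ in spread.
tight = normalized_index([50, 55, 45, 50], [50, 50, 50, 50])
spread = normalized_index([80, 20, 75, 25], [50, 50, 50, 50])
print(mean_and_sem(tight))
print(mean_and_sem(spread))
```

Both made-up groups have the same mean index, but the SEM of the high-spread group is several times larger, so an identical group mean carries much less information.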

Angry sounds are perceived as negative or social threat-related stimuli [46,47] and may therefore capture attention more readily than other prosodic sounds [48]. SPs experiencing inherently negative AH (either PH or IH), who are prone to a bias toward negative emotions [49], would therefore be expected to show accelerated responses to angry sounds.

In normal situations, high-salience stimuli (i.e., angry sounds) engage top-down attention circuitry (prefrontal cortex) [49] and bottom-up emotion circuitry (amygdala) [50] for high-level EI [51]. In the CPs, we observed a positive correlation between the latency of brain responses to high-salience angry sounds and EI performance; i.e., slower brain responses were associated with better EI performance. Faster brain responses to angry sounds (vs. neutral ones) may indicate an inherent attentiveness toward negative emotions [48]. After all, rapid detection of sociobiological threat cues (with or without a high number of false alarms) is beneficial for survival [52] and may affect higher-order social cognition [53]. Notably, the PH group retained this operational mechanism of the CPs, possibly as a compensatory reaction. Nonetheless, it is plausible that the rigidity of aberrant information processing [49,54] (e.g., attention bias with misattribution of negative emotions and deranged early perceptual processing) [55] in actively hallucinating SPs may eventually lead to abnormal neurocognition, as expressed in the poor EI performance of the PH group [56].

It is intriguing that, among the schizophrenia subgroups, only the PH group displayed a correlation between brain responses to negative emotions (i.e., sad/angry sounds) and EI performance; neither the IH nor the NH group presented this correlation. According to the theory of stochastic resonance, the ability to detect external signals may be enhanced by an increase in the amount of noise in a neuronal system [57]. Previous studies have reported that hallucinating SPs present elevated levels of cortical noise during information processing [44] as well as resting hyperactivity in the auditory cortex [16,58]. Thus, we speculate that PHs (vs. NHs/IHs and controls) are prone to higher background noise (task-irrelevant neural activity), faster perceptual processing of negative emotions, and misattribution of emotional salience.
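Stochastic resonance can be illustrated with a textbook threshold detector: a weak periodic signal that never crosses the threshold on its own becomes partly detectable once noise is added, and detection is best at an intermediate noise level. The sketch below is a generic illustration under stated assumptions (a hard threshold of 1.0, a sine of amplitude 0.5, Gaussian noise, and the correlation between signal and detector output as the detection score). It is not a model of auditory cortex or of the data in this study, and all names are hypothetical.

```python
import math
import random


def detection_score(noise_sd, threshold=1.0, amplitude=0.5, n=20000, period=100, seed=0):
    """Correlation between a subthreshold sine and the 0/1 output of a threshold detector.

    Because amplitude < threshold, the detector never fires without noise.
    """
    rng = random.Random(seed)  # fixed seed for reproducible noise
    signal = [amplitude * math.sin(2 * math.pi * k / period) for k in range(n)]
    fired = [1.0 if s + rng.gauss(0.0, noise_sd) > threshold else 0.0 for s in signal]
    n_fired = sum(fired)
    if n_fired == 0 or n_fired == n:
        return 0.0  # constant output carries no information about the signal
    mean_s = sum(signal) / n
    mean_f = n_fired / n
    cov = sum((s - mean_s) * (f - mean_f) for s, f in zip(signal, fired))
    var_s = sum((s - mean_s) ** 2 for s in signal)
    var_f = sum((f - mean_f) ** 2 for f in fired)
    return cov / math.sqrt(var_s * var_f)


for sd in (0.0, 0.1, 0.4, 1.0, 5.0):
    print(f"noise SD {sd}: detection score {detection_score(sd):.3f}")
```

With no or very little noise the score stays at zero, a moderate noise level yields a clearly positive score, and very large noise drowns the signal again; this inverted-U profile is the signature of stochastic resonance.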

It should be mentioned that the SPs in the present study demonstrated deficits in perceptual brain responses to sadness (vs. controls), consistent with other studies [18,59]. Sad prosody is characterized by low pitch variability, a relatively low fundamental frequency, low mean voice intensity, and a slow speech rate [60,61]. These attributes are likely to render sad sounds signals of reduced salience. Previous studies have reported significant deficits in the prosodic perception of sad stimuli in SPs [59,62], which could compromise accuracy in the identification of sad sounds [63]. Our finding of delayed brain responses to sad sounds in all schizophrenia subgroups (with past and/or concurrent AH) suggests that a reduced ability to perceptually process less emotionally salient environmental stimuli may be a characteristic trait of schizophrenia.
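The acoustic cues listed above can be quantified in many ways. As one hedged illustration (not the procedure used to characterize the stimuli in this study), the sketch below estimates the fundamental frequency (F0) of a frame from the lag of its autocorrelation peak, expresses mean intensity as an RMS level in dB, and takes pitch variability as the standard deviation of per-frame F0. The 50 to 400 Hz search range, the synthetic tones, and all function names are assumptions; speech rate is not covered, and real speech would call for a dedicated pitch tracker.

```python
import math
import statistics


def estimate_f0(frame, fs, fmin=50.0, fmax=400.0):
    """Crude F0 estimate (Hz): the lag with the largest autocorrelation within [fs/fmax, fs/fmin]."""
    min_lag = int(fs / fmax)
    max_lag = min(int(fs / fmin), len(frame) - 1)
    best_lag, best_r = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        r = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if r > best_r:
            best_lag, best_r = lag, r
    return fs / best_lag


def rms_db(frame, ref=1.0):
    """Root-mean-square level in dB relative to `ref`, a simple proxy for mean intensity."""
    rms = math.sqrt(sum(x * x for x in frame) / len(frame))
    return 20 * math.log10(rms / ref)


def pitch_variability(frames, fs):
    """Standard deviation (Hz) of per-frame F0 estimates; needs at least two frames."""
    return statistics.stdev([estimate_f0(f, fs) for f in frames])


def tone(freq, fs=16000, n=1600, amp=1.0):
    """Synthetic sine frame used as a stand-in for a voiced speech segment."""
    return [amp * math.sin(2 * math.pi * freq * i / fs) for i in range(n)]


fs = 16000
print(estimate_f0(tone(200), fs))                      # peak at lag 80 samples -> 200.0 Hz
print(pitch_variability([tone(200), tone(250)], fs))   # spread of F0 across two frames
print(rms_db(tone(200, amp=0.5)) - rms_db(tone(200)))  # halving amplitude is about -6 dB
```

A frame from a flat, quiet, low-pitched utterance would yield a low F0, a low RMS level, and little F0 spread across frames, which is the acoustic profile the cited studies associate with sad prosody.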

A few points require further consideration. First, we did not ask the participants to explicitly identify the valence or arousal level of each sound. The implicit paradigm of the current study was meant to emulate the participants' day-to-day lives, which is a preferable experimental setting when investigating the interactive processes involved in emotion perception and social cognition in SPs. Second, all of the SPs in this study were regularly taking psychotropic medications. Nonetheless, most patients were prescribed atypical antipsychotics (19/21 in the PH subgroup, 19/21 in the IH subgroup, and 20/21 in the NH subgroup), which suggests that medication-related confounds across subgroups were probably limited. Finally, the three schizophrenia subgroups achieved similar PANSS-depression scores, which makes psychological bias due to fluctuations in mood unlikely.

Conclusion

This paper presents a novel analytical approach to estimating emotion-specific brain responses. Our results revealed impairments in the early perceptual processing of one negative emotion (sadness) in SPs compared with CPs. Furthermore, dysfunction in the processing of negative emotions such as sadness appears to be a characteristic trait of schizophrenia, regardless of whether the individual experiences AH. Ongoing AH may invoke a predilection toward negative emotions with high arousal potential (e.g., anger), which may be associated with deficient perceptual processing and dysfunctional social cognition. Our findings imply that phenotyping SPs by AH severity (e.g., persistent/intermittent vs. remitted/no AH) and EI performance may be important for future studies of schizophrenia, given the differences in the early perceptual processing of emotional sounds and in EI among these subtypes.

Acknowledgments

The authors thank Chou-Ming Cheng, Yung-Ling Tseng, Ruei-Jyun Hung, and Jin-Jie Hung for their assistance with data collection, and Ian-Ting Chu for her contribution to the data bank of emotional prosody.

Funding

This research was supported by the National Health Research Institutes, Taiwan (pH-101-PP-48 and pH-102-PP-43); Taipei Veterans General Hospital (V103E3 and V105C-095); and Tri-Service General Hospital (TSGH-C101-124).

Ethics approval and consent to participate

This study was approved by the Institutional Review Boards of Taipei Veterans General Hospital (Permit Number: VGHTPE-IRB-2014-02-009A) and Tri-Service General Hospital (Permit Number: TSGHIRB-100-05-082).

Conflict of Interest Statement

All of the authors declare that they have no competing financial interests.

References

- Hugdahl K, Loberg EM, Specht K, et al. Auditory hallucinations in schizophrenia: the role of cognitive, brain structural and genetic disturbances in the left temporal lobe. Front. Hum. Neurosci 1(6), 1-10 (2008).

- Casarotti H. The hallucination: a perception deficit. Vertex 20(85), 200-205 (2009).

- Daalman K, Verkooijen S, Derks EM, et al. The influence of semantic top-down processing in auditory verbal hallucinations. Schizophr. Res 139(1-3), 82-86 (2012).

- Waters F, Allen P, Aleman A, et al. Auditory hallucinations in schizophrenia and nonschizophrenia populations: a review and integrated model of cognitive mechanisms. Schizophr. Bull 38(4), 683-693 (2012).

- Alba-Ferrara L, Fernyhough C, Weis S, et al. Contributions of emotional prosody comprehension deficits to the formation of auditory verbal hallucinations in schizophrenia. Clin. Psychol. Rev 32(4), 244-250 (2012).

- Copolov DL, Mackinnon A, Trauer T. Correlates of the affective impact of auditory hallucinations in psychotic disorders. Schizophr. Bull 30(1), 163-171 (2004).

- Couture SM, Penn DL, Roberts DL. The functional significance of social cognition in schizophrenia: a review. Schizophr. Bull 32(1), S44-63 (2006).

- Poole JH, Tobias FC, Vinogradov S. The functional relevance of affect recognition errors in schizophrenia. J. Int. Neuropsychol. Soc 6(6), 649-658 (2000).

- Hooker C, Park S. Emotion processing and its relationship to social functioning in schizophrenia patients. Psychiatry Res 112(1), 41-50 (2002).

- Phillips ML, Drevets WC, Rauch SL, et al. Neurobiology of emotion perception II: implications for major psychiatric disorders. Biol. Psychiatry 54(5), 515-528 (2003).

- Javitt DC, Sweet RA. Auditory dysfunction in schizophrenia: integrating clinical and basic features. Nat. Rev. Neurosci 16(9), 535-550 (2015).

- Pinheiro AP, Rezaii N, Rauber A, et al. Abnormalities in the processing of emotional prosody from single words in schizophrenia. Schizophr. Res 152(1), 235-241 (2014).

- Jarkiewicz M, Wichniak A. Can new paradigms bring new perspectives for mismatch negativity studies in schizophrenia? Neuropsychiatr. Electrophysiol 1(1), 12 (2015).

- Oades RD, Wild-Wall N, Juran SA, et al. Auditory change detection in schizophrenia: sources of activity, related neuropsychological function and symptoms in patients with a first episode in adolescence, and patients 14 years after an adolescent illness-onset. BMC Psychiatry 6, 7 (2006).

- Fisher DJ, Smith DM, Labelle A, et al. Attenuation of mismatch negativity (MMN) and novelty P300 in schizophrenia patients with auditory hallucinations experiencing acute exacerbation of illness. Biol. Psychol 100, 43-49 (2014).

- Kompus K, Westerhausen R, Hugdahl K. The "paradoxical" engagement of the primary auditory cortex in patients with auditory verbal hallucinations: a meta-analysis of functional neuroimaging studies. Neuropsychologia 49(12), 3361-3369 (2011).

- Li B, Cui LB, Xi YB, et al. Abnormal effective connectivity in the brain is involved in auditory verbal hallucinations in schizophrenia. Neurosci. Bull 33(3), 281-291 (2017).

- Rossell SL, Boundy CL. Are auditory-verbal hallucinations associated with auditory affective processing deficits? Schizophr. Res 78(1), 95-106 (2005).

- Penn DL, Corrigan PW, Bentall RP, et al. Social cognition in schizophrenia. Psychol. Bull 121(1), 114-132 (1997).

- Lancaster RS, Evans JD, Bond GR, et al. Social cognition and neurocognitive deficits in schizophrenia. J. Nerv. Ment. Dis 191(5), 295-299 (2003).

- Kee KS, Horan WP, Salovey P, et al. Emotional intelligence in schizophrenia. Schizophr. Res 107(1), 61-68 (2009).

- Eack SM, Greeno CG, Pogue-Geile MF, et al. Assessing social-cognitive deficits in schizophrenia with the Mayer-Salovey-Caruso Emotional Intelligence Test. Schizophr. Bull 36(2), 370-380 (2010).

- Wojtalik JA, Eack SM, Keshavan MS. Structural neurobiological correlates of Mayer-Salovey-Caruso Emotional Intelligence Test performance in early course schizophrenia. Prog. Neuropsychopharmacol. Biol. Psychiatry 40, 207-212 (2013).

- Mao WC, Chen LF, Chi CH, et al. Traditional Chinese version of the Mayer Salovey Caruso Emotional Intelligence Test (MSCEIT-TC): its validation and application to schizophrenic individuals. Psychiatry Res 243, 61-70 (2016).

- Green MF, Bearden CE, Cannon TD, et al. Social cognition in schizophrenia, part 1: performance across phase of illness. Schizophr. Bull 38(4), 854-864 (2012).

- O'Reilly K, Donohoe G, Coyle C, et al. Prospective cohort study of the relationship between neuro-cognition, social cognition and violence in forensic patients with schizophrenia and schizoaffective disorder. BMC Psychiatry 15, 155 (2015).

- American Psychiatric Association. Diagnostic and statistical manual of mental disorders (DSM-IV-TR), 4th edn. Washington, DC: American Psychiatric Association (2000).

- Kay SR, Fiszbein A, Opler LA. The positive and negative syndrome scale (PANSS) for schizophrenia. Schizophr. Bull 13(2), 261-276 (1987).

- Haddock G, McCarron J, Tarrier N, et al. Scales to measure dimensions of hallucinations and delusions: the psychotic symptom rating scales (PSYRATS). Psychol. Med 29(4), 879-889 (1999).

- MacCann C, Joseph DL, Newman DA, et al. Emotional intelligence is a second-stratum factor of intelligence: evidence from hierarchical and bifactor models. Emotion 14(2), 358-374 (2014).

- Mayer JD, Roberts RD, Barsade SG. Human abilities: emotional intelligence. Annu. Rev. Psychol 59, 507-536 (2008).

- Chu IT. Primary dysmenorrhea alters the perceptual processing of emotional prosody: a MEG study. Thesis. Taipei, Taiwan: National Yang-Ming University (2013).

- Dale AM, Fischl B, Sereno MI. Cortical surface-based analysis. I. Segmentation and surface reconstruction. Neuroimage 9(2), 179-194 (1999).

- Hamalainen M, Hari R, Ilmoniemi RJ, et al. Magnetoencephalography: theory, instrumentation, and applications to noninvasive studies of the working human brain. Rev. Mod. Phys 65(2), 413-497 (1993).

- Hamalainen MS, Sarvas J. Realistic conductivity geometry model of the human head for interpretation of neuromagnetic data. IEEE Trans. Biomed. Eng 36(2), 165-171 (1989).

- Oram Cardy JE, Ferrari P, Flagg EJ, et al. Prominence of M50 auditory evoked response over M100 in childhood and autism. Neuroreport 15(12), 1867-1870 (2004).

- Li LP, Shiao AS, Chen KC, et al. Neuromagnetic index of hemispheric asymmetry prognosticating the outcome of sudden hearing loss. PLoS One 7(4), e35055 (2012).

- Alba-Ferrara L, Hausmann M, Mitchell RL, et al. The neural correlates of emotional prosody comprehension: disentangling simple from complex emotion. PLoS One 6(12), e28701 (2011).

- Mitchell RLC, Elliott R, Barry M, et al. The neural response to emotional prosody, as revealed by functional magnetic resonance imaging. Neuropsychologia 41(10), 1410-1421 (2003).

- Cohen BH. Explaining Psychological Statistics. Hoboken, NJ: John Wiley & Sons (2008).

- Rosburg T, Boutros NN, Ford JM. Reduced auditory evoked potential component N100 in schizophrenia: a critical review. Psychiatry Res 161(3), 259-274 (2008).

- Turetsky BI, Bilker WB, Siegel SJ, et al. Profile of auditory information-processing deficits in schizophrenia. Psychiatry Res 165(1-2), 27-37 (2009).

- Pinheiro AP, Del Re E, Mezin J, et al. Sensory-based and higher-order operations contribute to abnormal emotional prosody processing in schizophrenia: an electrophysiological investigation. Psychol. Med 43(3), 603-618 (2013).

- Winterer G, Ziller M, Dorn H, et al. Schizophrenia: reduced signal-to-noise ratio and impaired phase-locking during information processing. Clin. Neurophysiol 111(5), 837-849 (2000).

- Gilmore CS, Clementz BA, Buckley PF. Rate of stimulation affects schizophrenia-normal differences on the N1 auditory-evoked potential. Neuroreport 15(18), 2713-2717 (2004).

- Mothes-Lasch M, Becker MP, Miltner WH, et al. Neural basis of processing threatening voices in a crowded auditory world. Soc. Cogn. Affect. Neurosci 11(5), 821-828 (2016).

- Mothes-Lasch M, Mentzel HJ, Miltner WH, et al. Visual attention modulates brain activation to angry voices. J. Neurosci 31(26), 9594-9598 (2011).

- Aue T, Cuny C, Sander D, et al. Peripheral responses to attended and unattended angry prosody: a dichotic listening paradigm. Psychophysiology 48(3), 385-392 (2011).

- Alba-Ferrara L, de Erausquin GA, Hirnstein M, et al. Emotional prosody modulates attention in schizophrenia patients with hallucinations. Front. Hum. Neurosci 7, 59 (2013).

- Sander D, Grandjean D, Pourtois G, et al. Emotion and attention interactions in social cognition: brain regions involved in processing anger prosody. Neuroimage 28(4), 848-858 (2005).

- Salovey P, Mayer JD. Emotional intelligence. 185-231 (1990).

- Van Damme S, Crombez G, Notebaert L. Attentional bias to threat: a perceptual accuracy approach. Emotion 8(6), 820-827 (2008).

- Mehta UM, Thirthalli J, Bhagyavathi HD, et al. Similar and contrasting dimensions of social cognition in schizophrenia and healthy subjects. Schizophr. Res 157(1-3), 70-77 (2014).

- Premkumar P, Cooke MA, Fannon D, et al. Misattribution bias of threat-related facial expressions is related to a longer duration of illness and poor executive function in schizophrenia and schizoaffective disorder. Eur. Psychiatry 23(1), 14-19 (2008).

- Jang SK, Park SC, Lee SH, et al. Attention and memory bias to facial emotions underlying negative symptoms of schizophrenia. Cogn. Neuropsychiatry 21(1), 45-59 (2016).

- Fett AK, Viechtbauer W, Dominguez MD, et al. The relationship between neurocognition and social cognition with functional outcomes in schizophrenia: a meta-analysis. Neurosci. Biobehav. Rev 35(3), 573-588 (2011).

- Winterer G, Ziller M, Dorn H, et al. Cortical activation, signal-to-noise ratio and stochastic resonance during information processing in man. Clin. Neurophysiol 110(7), 1193-1203 (1999).

- Kompus K, Falkenberg LE, Bless JJ, et al. The role of the primary auditory cortex in the neural mechanism of auditory verbal hallucinations. Front. Hum. Neurosci 7, 144 (2013).

- Bozikas VP, Kosmidis MH, Anezoulaki D, et al. Impaired perception of affective prosody in schizophrenia. J. Neuropsychiatry. Clin. Neurosci 18(1), 81-85 (2006).

- Leitman DI, Laukka P, Juslin PN, et al. Getting the cue: sensory contributions to auditory emotion recognition impairments in schizophrenia. Schizophr. Bull 36(3), 545-556 (2010).

- Petkova E, Lu F, Kantrowitz J, et al. Auditory tasks for assessment of sensory function and affective prosody in schizophrenia. Compr. Psychiatry 55(8), 1862-1874 (2014).

- Edwards J, Pattison PE, Jackson HJ, et al. Facial affect and affective prosody recognition in first-episode schizophrenia. Schizophr. Res 48(2-3), 235-253 (2001).

- Ramos-Loyo J, Mora-Reynoso L, Sanchez-Loyo LM, et al. Sex differences in facial, prosodic, and social context emotional recognition in early-onset schizophrenia. Schizophr. Res. Treat 2012, 584725 (2012).